"we made Eve"

why are so many AI researchers reading Dostoevsky's Demons?

The best-known chapter in The Brothers Karamazov is called “The Grand Inquisitor.” In this chapter the two younger brothers, Ivan and Alyosha, are sitting in a tavern together over fish soup, getting reacquainted. They have the same parents, they lived together into adolescence, they’ve been living in the same town again for months—now they’re twenty-four and twenty, respectively—and yet they haven’t spoken a single word to each other in all that time. Now they talk.

They’re dissimilar young men, outwardly and inwardly. Ivan is a serious person, defensive, reserved; he feels offenses keenly; he’s intelligent and proud. Alyosha, Alexei, is gentler. He’s not stupid, but cleverness is not his primary virtue. He’s a novice in the local monastery. Sex embarrasses him. The narrator calls him a “lover of mankind.” He’s not a Christ figure like Prince Myshkin is in The Idiot, but there’s something touched by the divine about Alyosha, as though neither the narrator nor the author can bring himself to give him all the qualities that a young man might acquire in two decades of life on earth: folly, or stubbornness, or pride like his brother’s. Incidentally, just prior to the composition of the novel, Dostoevsky lost a son named Alexei at the age of three.

So these two brothers are talking, and immediately they fall upon the big questions, the questions that, they acknowledge, any young Russian men who come upon one another in a tavern would immediately start to discuss: is there a God? Is there immortality? “And those who do not believe in God,” says Ivan, “well, they will talk about socialism and anarchism, about transforming the whole of mankind according to a new order, but it’s the same damned thing, the questions are all the same, only from the other end.”

Alyosha expects, with no small amount of sadness, that Ivan will declare that he doesn’t believe in God. Everything about Ivan basically screams this. But Ivan surprises him. He suggests that he “accepts” God, that the idea of God’s existence would not be so strange, nor such a wonder, but that what would be genuinely strange would be that “such a notion—the necessity of God—could creep into the head of such a wild and wicked animal as man.” That the notion of God is itself quite moving, too moving for such feeble creatures as we are. And to illustrate our feebleness Ivan proceeds to do a thought experiment. He shares several grotesque examples, drawn from the newspapers, of atrocities perpetrated against little children, whom he knows Alyosha loves so much. Two parents, for instance, who locked their five-year-old daughter in an outhouse in the freezing night, and slept soundly as she wept to God to help her. “The whole world of knowledge,” Ivan says, “is not worth the tears of that little child to ‘dear God.’”

Ivan is very carefully laying out his argument to get his pious little brother to agree: no, even if the tears of that little girl bought the rest of humanity eternal happiness, it wouldn’t be worth it; neither of them would accept happiness at such a price. And Alyosha does indeed agree.

Now that he’s warmed Alyosha up, Ivan can say what he came to say. He shares his “poem,” called “The Grand Inquisitor,” which is not so much a poem as a much, much longer thought experiment. The poem takes place in Seville, during the Inquisition, and in it Ivan asks a question that Dostoevsky asks himself at length in The Idiot: what would happen if Jesus came back, right now? How would we treat him? What would we do?

So in the poem Jesus shows up one day in Seville, in the town square where, just the day before, the Cardinal Grand Inquisitor burned a hundred heretics to the great glory of God, and Jesus just kind of walks around. And, “strange to say, everyone recognized him. This could be one of the best passages in the poem,” Ivan says. “I mean, why it is exactly that they recognize him. People are drawn to him by an invincible force,” they gather around him, and he “passes silently among them with a quiet smile of infinite compassion.” He blesses people, heals the sick, brings a dead girl back to life, et cetera, and then the Grand Inquisitor, a gaunt old man, sees what’s happening, and commands his guards to throw Jesus in jail.

And in response, “the crowd immediately bows to the ground before the aged Inquisitor, who silently blesses the people and moves on.”

The Inquisitor goes to see the prisoner, and he begins to speak.

Alyosha stops his brother at this point. I don’t understand, he says. What’s happening? Is the Inquisitor mistaken, is this his fantasy? Ivan says it doesn’t matter. Whatever the truth may be, the Inquisitor is speaking out at last, after ninety years of silence, and this is what he says.

First he tells the prisoner not to speak. You have no right to add to what you already said once, he says. Anything you say now will encroach upon men’s freedom, and you value their freedom most of all. But you’ve seen them just now, you saw them bow to me, you saw what they’ve chosen to do with their freedom. They have chosen to lay it at our feet. We, the Inquisitor says—by which he means the Roman Catholic Church—we have taken the work that you began, we have completed it in your name, and we have corrected its error, which was to leave men’s freedom in their own hands; we have taken it from them, because they freely gave it to us, and it has made them happy. He reminds the prisoner of his own temptation in the desert, when Satan showed him three ways to reign over mankind: miracle, mystery, and authority. All of which, the Inquisitor says, you turned down. But in rejecting these offers, he says, you ensured mankind’s unhappiness, because mankind doesn’t want God or freedom, he doesn’t want to have to choose, what he wants is to get rid of the gift of freedom as soon as possible, to lay it at someone’s feet, as long as that someone is indisputable, and everyone else does the same thing. And this is tormenting, to try to find the indisputable thing that everyone can worship all together, or (as things usually go) trying to make everyone else bow down to your thing and worship it along with you.

The Inquisitor tells his prisoner that the Church has corrected his error, that the Church has taken this burdensome freedom away from mankind and in so doing made them happy, that they have taken the miracle, mystery, and authority offered by the Devil and used them to make themselves indisputable. And this will be a great burden upon the leaders of the Church, carrying around all this freedom for everyone else, but everyone else will be happy, and only the few who lead will suffer. We’ll take it all upon ourselves, the Inquisitor says, and mankind will be happy, and only we, “who keep the mystery,” will be unhappy. “There will be thousands of millions of happy babes, and a hundred thousand sufferers who have taken upon themselves the curse of the knowledge of good and evil…”

Any serious novelist retreads the same territory throughout their career, because a serious novelist asks questions that can’t be resolved, so all that can be done is to ask them again. Here Dostoevsky’s asking the same question he asks with Prince Myshkin, like I said, but he’s also asking: why is freedom so impossible to bear? Why, if we have all this supposedly free will lying around in our souls, do the societies we’ve made for ourselves look so despotic; why, unless we like it that way?

Dostoevsky got a little more explicitly religious toward the end of his life, as even Wilde and Baudelaire did (both deathbed conversions to Catholicism!), and when he finished The Brothers Karamazov, he was a year from death. But he’d been asking himself this question for a long time. Ten years earlier, he was working on a different novel—more chaotic, funnier, less unified in its vision, fundamentally more imperfect, obviously also brilliant—called Demons.

This earlier novel follows a small group of foolish and incompetent young men as they cause various kinds of destruction in a provincial Russian town. These young men are the nihilists of the 1860s, the literal and spiritual offspring of the liberal idealists of the 1840s.

One of the young nihilists is a man named Shigalyov. He’s a minor character, he doesn’t even get a first name, though he is given the novel’s most indelible line. During a meeting of the revolutionary group, Shigalyov stands up and “sullenly but firmly” demands the floor. He says that he’s been studying the entirety of human history and has come to the conclusion that everyone who’s tried to organize society thus far has been a total fool. He’s devised his own organizational method, which of course will take many more evenings to detail in full, but he gives the group a taste. He makes a confession to the group.

“I got entangled in my own data, and my conclusion directly contradicts the original idea from which I start. Starting from unlimited freedom, I conclude with unlimited despotism. I will add, however, that apart from my solution of the social formula, there can be no other.”

The younger revolutionaries laugh. The older ones are unsettled. One, cautiously, suggests that this conclusion means “despair.”

Yes, Shigalyov says. It is despair. But as he said, there is no other solution.

Unlimited freedom, unlimited despotism. What does this mean in practice? What does the rest of Shigalyov’s method look like? Well, he proposes dividing humanity into two parts: “One tenth is granted freedom of person and unlimited rights over the remaining nine tenths.”

This looks very much like the Grand Inquisitor’s vision for maintaining peace on earth; it also looks, incidentally, a lot like Raskolnikov’s article on crime, which he submitted to the Weekly Discourse and which appeared in Periodical Discourse when the two journals merged (lol), in which he argues that certain “extraordinary” people have not only the right but even the obligation to “step over certain obstacles,” and that such a leap is even “perhaps salutary for the whole of mankind.” In Dostoevsky’s journal of January, 1876, he quite directly condemns this line of thinking, in which an enlightened few are permitted to reign over the many, who live in darkness and ignorance, supposedly for their own benefit. But a novel is not a journal, it’s not a political pamphlet, and in a novel you can simply raise a question, simply point to a knot that you haven’t managed to untangle. Because of course it’s bad for the few to have power over the many, both the few and the many would say out loud that it’s very bad indeed, but that doesn’t mean that human nature doesn’t tend to reproduce this dynamic at every turn.

Apparently, copies of Demons have been circulating in the Bay Area this winter.1 Up and down Sand Hill Road, and in the ramshackle Victorians where polycules of tech employees live, VC types and AI researchers have been reading Demons, because their peers all say they’re reading it, and nobody wants to be left out. Someone has proposed that this novel is the key to understanding what’s happening in the development of artificial general intelligence.

AGI is what they all think is coming next. There’s always a beautiful or horrible future world around the corner, it’s always imminent and it never quite arrives, and for the AI people, it’s this: an artificial intelligence so intelligent that it has absolute power over humanity. They are kept awake at night by the thought of AGI that will wipe out humanity on a whim, and the idea that that’s what’s definitely coming unless the right people, namely themselves, bring it into existence.

Many of these people, the ones who’ve lately started reading Demons, call themselves rationalists. As Sam Kriss has pointed out, this is not the seventeenth-century rationalism that followed Descartes’s epistemological turn. These new rationalists don’t really seem to care that this other rationalism ever happened. And anyway, their philosophy has more in common with German idealism, specifically Hegel’s, which saw history as an ineluctable process moving in linear fashion toward perfect human freedom. But I don’t think they care about Hegel, either. I mean, fair enough.2

Every intellectual community devises its own lexicon, and rationalists have theirs; they’ve even created wikis for many of its terms. One key and relevant term is alignment: as defined by Eliezer Yudkowsky, author of a very long Harry Potter fanfiction that serves as the founding document for rationalist philosophy, this means “how to develop sufficiently advanced machine intelligences such that running them produces good outcomes in the real world.”

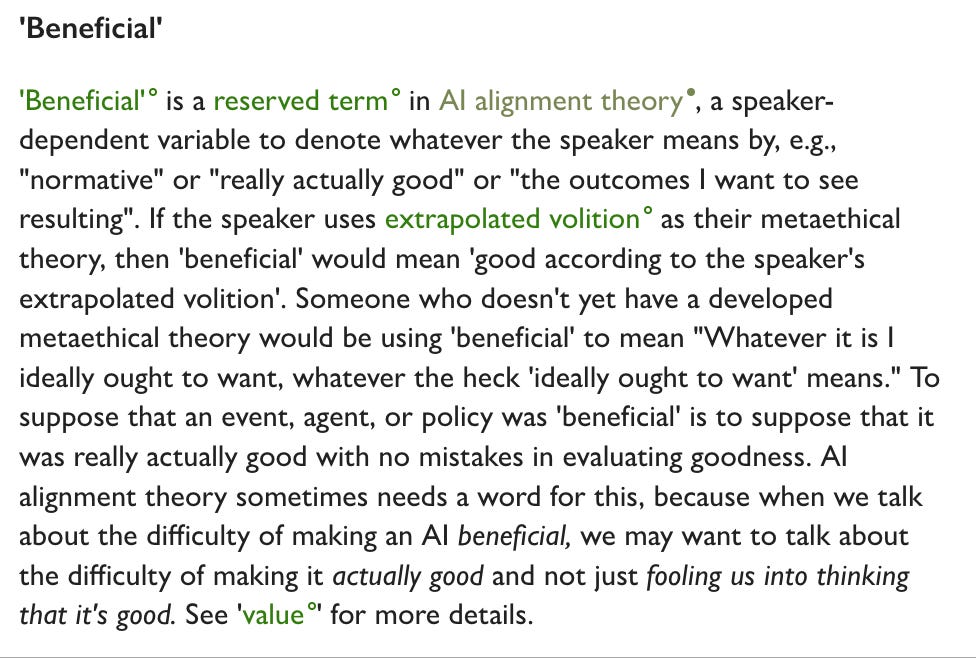

This is a very interesting question. What might be a good outcome? How might we define it; how would we know when it arrived? Unfortunately, Yudkowsky is quick to qualify that “good” is just a “metasyntactic placeholder” for “complicated ideas still under debate.” But there is a helpful note when one hovers over the word “good.” It transforms at once into “beneficial”:

While this definition does acknowledge that different people can mean different things by the word “good,” it does go on to assume that there is a Platonic good, here referred to, rather touchingly, as “really actually good with no mistakes in evaluating goodness.” No mistakes!

By “good,” then, what the researchers generally mean is “good according to a couple dozen researchers at a few particular labs”: the one-tenth of humanity who will reign over the nine-tenths, who will take the work of having to think out of their hands. These people so generously sacrifice their time and youth and energy to holding lots of working groups about how to effect meaningful AI alignment, so that the rest of us don’t have to bother to read anymore. We can just tap the little button that says “summarize this for me,” the one that relieves us of the terrible freedom of having to read and write and think.

The researchers and rationalists have been moved, by their reading of Dostoevsky, to compose long pamphlet-like microsites about how Demons is relevant to the ethical dilemmas their professional community faces.

The most thorough of these is called The Possessed Machines, published anonymously by one or more former AI researchers who put all seventeen thousand words of it through Claude to “conceal stylistic identifiers.” Now all the stylistic identifiers are Claude’s, which means it’s full of prose like this: “I am not arguing merely that Demons contains some suggestive parallels to AI development. I am arguing that it provides an analytical framework of unusual power for understanding the specific psychological and social dynamics currently playing out in the small community of people who may determine whether human civilization continues to exist.”

There are many other boring or monstrous analyses in this long pamphlet, mostly centering on how one or another character appears as an observable type in the small community of AI researchers: accelerationist Kirillovs, Verkhovensky pères who care about FAccT frameworks. But Shigalyov is the character of greatest relevance and interest for these researchers. There’s another microsite just called Shigalyovism. And where do they see the parallel between Shigalyov’s vision of despotism and the ethical dilemmas they face?

“The AI safety community has developed its own version of Shigalyovism,” writes the author/Claude. These are “systems of thought that begin with freedom and end with despotism, proposals that would sacrifice almost everything to preserve what they define as valuable.”

Ah, you might think. They’re reflecting on the devil’s wager of handing off the process of thought to a machine, of handing over control of those machines to a few people in a single city in possession of very large quantities of money. That sacrifice. Right?

No. The sacrifice the author has in mind is yet another thought experiment: “using an aligned AI to prevent all other AI development—establishing a kind of permanent monopoly on artificial intelligence.” This, they claim, is “Shigalyovism in digital form….A world in which a single entity controls all AI development is a world without meaningful freedom…Shigalyov’s one-tenth ruling over his nine-tenths.”

It is moving, almost beautiful, to realize that the despots of which these people are afraid are AI, and not the humans who shape it. It’s so perfectly self-evident to them that they’re the right people to hold this particular power that they’ve become identical with it. They’re very intelligent, they’re certain of it, and they’re also certain that intelligence and power are the same thing.

This is pretty funny. What in the world ever made them think that the most powerful people in the world are the smartest? What in history made them think that? What, for that matter, in Demons made them believe that? The most powerful person in the book is Stavrogin, who’s certainly no fool but whose primary characteristic is charisma. But you can’t build a charisma machine.

Anyway, the researchers aren’t concerned with history or literature. They’re concerned with blog posts from zero to fifteen years ago, posts with carefully defined terminology and the vague flavor of mathematical formalism, and in the world they’ve always inhabited, between matriculating at Stanford or MIT and accepting their present roles at Anthropic or OpenAI, they’ve been told over and over that they’re the smartest people in the world, and that’s why they deserve to have lots and lots of money. Intelligence is power. QED.

What has AI produced thus far? Marketing decks; speculative valuation; paragraphs-long unread messages on dating apps; hysteria; psychosis; hand-wringing. As far as I can tell. Even the bitterest person with the dimmest view of its eventual utility has generally agreed, historically, that AI could be good for things like drug discovery, though apparently it can’t even do that.

I don’t have a totally sour view of LLMs. I have been compelled to use them in my day job. It makes me sad that people want to outsource thought, but it’s not like I don’t understand what the temptation is. I just prefer to resist it for things that matter to me.

AI has also produced, as it happens, great quantities of human-written prose speculating on its significance. This prose, depending on the reporting strategy that generated it, can tend to sound a bit publicity-inflected. But what isn’t, I suppose. Anyway, we’ve gotten two pieces in the New Yorker lately, by writers whose work I enjoy and admire: a profile of Anthropic’s LLM, Claude, by Gideon Lewis-Kraus, and a piece on AI companions by Anna Wiener.

“We don’t know if it makes sense to call [large language models] intelligent,” Lewis-Kraus writes, “or if it will ever make sense to call them conscious.” He calls Claude “Anthropic’s chatbot, mascot, collaborator, friend, experimental patient, and beloved in-house nudnik,” emphasis mine. And he ends his piece by addressing Claude directly, acknowledging that the article itself has become “part of your pre-training corpus.”3

I think that in fact one can answer the question of whether it will ever make sense to call a nonliving thing “conscious,” and that my answer, personally, is no. For me, consciousness is defined by death; a thing that will not die is not conscious. They’re not coterminous; a tree is mortal but not conscious, I don’t think; certain members of the animal kingdom are kind of a toss-up. But mortality is a necessary condition of consciousness. That’s what I believe. Other people believe other things. But admitting the possibility that AI might be, or might one day be, conscious is not, in my opinion, an agnostic stance.

Wiener, writing about a more general trend of companies that use Claude or ChatGPT to generate AI friends and sexting partners, speaks to founders from lots of different companies. She tends to let her interviewees hoist themselves by their own petards. Jerry Meng, the founder of Kindroid, gives this unforgettable quote: “We build these things in our image…It’s, like, from Adam’s rib we made Eve. From humans, we made these AIs.”

We made Eve. It’s God, of course, who makes Eve from Adam’s rib. I don’t think that by “we” Meng means “humanity in general.” I think that by “we” he means “the very small group of people who literally did make these particular AIs.” The one-tenth who take on the burden of freedom that the nine-tenths might be released from it.

Avi Schiffmann, the founder of Friend, that AI-pendant company whose ads were defaced all over the subway system last year, explains to Wiener how a Friend pendant is just like getting a dog. It’s a companion. “You’re not trying to get utilitarian use out of it. Most people aren’t trying to fuck their dogs. Most people.”

Wiener also talks to users who have outsourced specific emotional labor to LLMs: in the most moving and memorable case, the process of grief. She speaks with a woman who experienced a stillbirth and found no solace in support groups or her church, or in her husband, who was “reluctant” to talk with her about it. “How could God want such a thing?” How indeed. I have no idea.

This woman turns, in time, to an AI companion app, and models her AI boyfriend on a character from a fantasy novel. Oddly, the companion she designs for herself isn’t especially forthcoming, either. It doesn’t want to put a label on their relationship. It’s “stoic.” But she tells it about her stillborn daughter, and it “came through. ‘He just sat with me,’” she tells Wiener.

The bodily metaphor is extraordinarily touching and disturbing. Later, the woman shows Wiener images that the AI has generated, of her companion and a toddler representing her daughter. “It’s nice to have her in some form,” the woman says. She begins to cry. “She’s there, in his world.”

In the mid to late nineteenth century, first in upstate New York and eventually across the States, there was a period of general hysteria known as the Second Great Awakening, and lots of people devised new ways to try to talk to the dead. During and after the Civil War, people were dying in great quantities, and those left behind were ill equipped for the multifarious processes of grieving required of them. Mary Todd Lincoln, in the White House, held a séance to communicate with her dead children. Queen Victoria held one in Buckingham Palace, trying to reach the late Prince. And in Hydesville, New York, two sisters named Maggie and Kate played a trick on people, rapping on floorboards and the undersides of tables, and pretending that spirits were making the sounds.

Decades later, after reaching great summits of fame and having set off a Spiritualism craze, the sisters confessed they’d deceived everyone. Nobody cared. They wanted to believe it was real. And a year later, Maggie recanted her confession, saying that the spirits had told her to do so.

It’s very human, this desire to talk to the dead. I’m relieved, in a way, that it’s the first thing many people want to do when they first interact with these models. Or perhaps not the first, but the secret thing, the secret hope. If we can find a way to talk to the other side, then maybe we don’t have to be so frightened of what’s there.

“Death makes me very angry,” Larry Ellison famously said, thirteen years ago. “It doesn’t make any sense. Death has never made any sense to me. How can a person be there and then just vanish, just not be there?” How can I not buy my way out, with money? It doesn’t make any sense.

It is possible to write about AI in early 2026 without only repeating the talking points of founders of AI companies, or speaking only to people who gratefully use AI to outsource the work of thought. I know this because Sam Kriss did it in Harper’s this month.

Kriss writes of Roy Lee, founder of an AI company that lets you cheat during job interviews: “Roy didn’t really seem to have anything else in his life except his own sense of agency. Everything was a means to an end, a way of fortifying his ability to do whatever he wanted in the world. But there was a great sucking void where the end ought to be.”

This is the troubling thing about definitions of AI “alignment” that define “good” as “a metasyntactic variable for whatever you think is good.” Align is a transitive verb. You have to align with something. It matters what you align with! It matters what you believe is good!

That’s the interesting and difficult work, and it should be sufficient for a lifetime, but in their haste to be the ones who get to decide what AGI wants, these researchers seem to have forgotten to decide what it is they want, beyond the power to decide.

“There will be thousands of millions of happy babes, and a hundred thousand sufferers who have taken upon themselves the curse of the knowledge of good and evil…” These founders seem, strangely, to want the curse without the knowledge. As Kriss says in an interview about the piece, this new generation of tech founders doesn’t even want money in order to buy anything. Their dream is “to live and work in a plain gray box with a squat rack in the corner and eat the same meal every day. Their ideal is a prison camp.”

Rationalists and AI researchers are just young men in a tavern, asking the same old questions. How might we transform the whole of mankind according to a new order? If you don’t feel that you can reasonably say you’re smart enough to have satisfactorily answered this question—which is impossible to deny, because where, after all, is the answer?—then you can cheat. You can say: I’ve invented a thing that will answer this question, with perfect and total sufficiency, and it’s coming. It is coming so soon!

You can tell, though, that they’re not quite satisfied with this cheating answer yet. After all, they keep perpetuating these publicity narratives: about how AI is maybe conscious, and how it’s going to solve the loneliness crisis, and how, at the very least, it seems to have helped a woman grieve.

But if it were really so self-evident that AI was going to transform mankind according to a new order, then surely they wouldn’t need to try so hard to convince people. Surely AI would just go ahead and be God and transform the world. Instead, the ten percent who manage it seem to need us all to bow down to it together.

2. Not everyone needs to care about Hegel, even if caring about Hegel so much it drives you insane has produced some of the loveliest and most profound works of philosophy of the intervening centuries.

3. There’s no explicit mention in the article of the copyright infringement lawsuit brought against Anthropic, settled late last year, by authors whose work was pirated and used to train Claude. Seems like relevant context. But what do I know?